DSN-2024: Artifacts Call For Contributions

DSN supports open science, where authors of accepted papers are encouraged to make their tools and datasets publicly available to ensure reproducibility and replicability by other researchers. New this year, DSN 2024 will offer a separate artifact evaluation track to all accepted papers from all three categories of the research track. The goals of the artifact track are to (1) increase confidence in a paper’s claims and results, and (2) facilitate future research via publicly available datasets and tools.

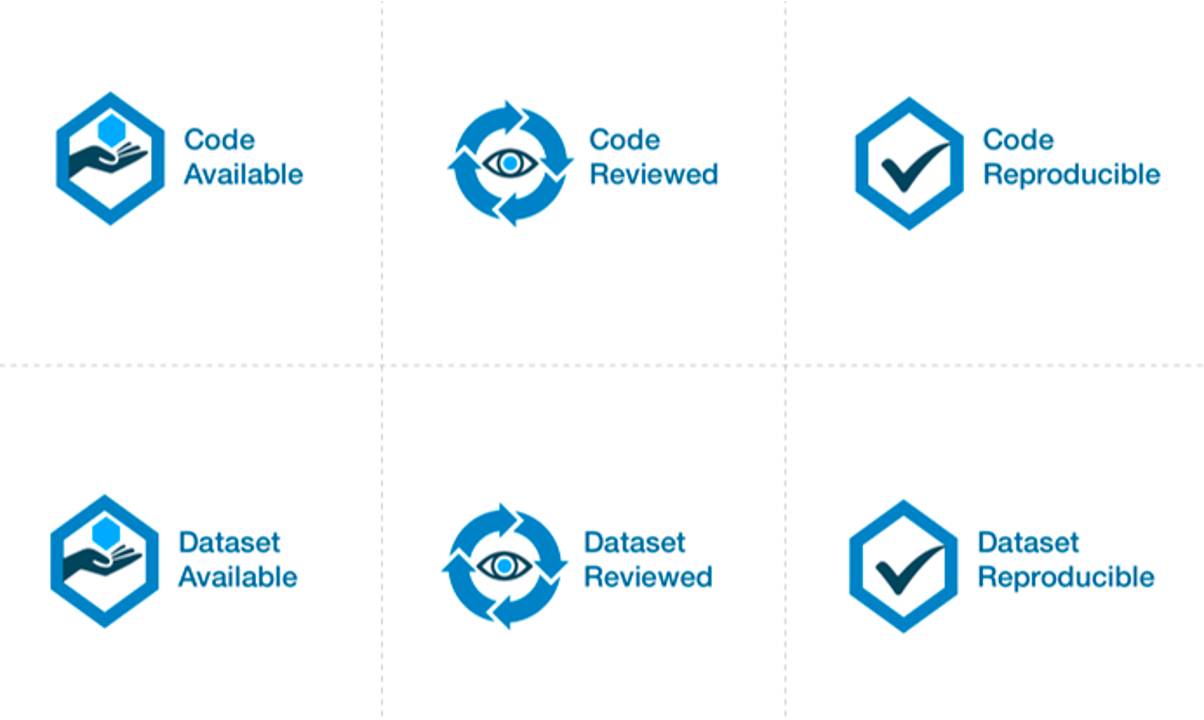

Badges

The availability of artifacts accompanying the papers will be denoted by badges. Badges will appear on the paper's page in the digital library. Since DSN is an IEEE-sponsored conference, it follows the scheme of the IEEE Xplore digital library (see https://ieeexplore.ieee.org/Xplorehelp/overview-of-ieee-xplore/about-content#reproducibility-badges). Accordingly, DSN will award the following three types of badges:

- Available: The code and/or datasets, including any associated data and documentation, provided by the authors is reasonable and complete and can potentially be used to support reproducibility of the published results.

- Reviewed: The code and/or datasets, including any associated data and documentation, provided by the authors is reasonable and complete, runs to produce the outputs described, and can support reproducibility of the published results.

- Reproducible: This badge signals that an additional step was taken or facilitated to certify that an independent party has regenerated computational results using the author-created research objects, methods, code, and conditions of analysis. Reproducible assumes that the research objects were also reviewed.

The “Reviewed” badge implies that the artifact also qualifies for the “Available” badge. The “Reproducible” badge subsumes both the “Reviewed” and “Available” badges. Authors can apply for all three types (i.e., claiming that the artifact is Available, Reviewed, and Reproducible).

Artifacts can be Code or Datasets. The same research paper can be accompanied by both Code and Datasets.

IEEE Xplore also allows a fourth type of badge (“Replicated”). This fourth badge is only for replication studies performed by other authors and will not be awarded as part of this artifact evaluation process.

Artifact Submissions

At the time of paper submission, authors must indicate (1) whether they intend to submit an artifact for their paper, (2) the type of artifact (code, dataset, or both), (3) a DOI reserved for the artifact on an open-access repository (Zenodo or Figshare), and (4) the badge(s) they are applying for.

Please note that we require the artifact to be submitted through either Zenodo (https://zenodo.org/) or Figshare (https://figshare.com/). These are two popular open-access repositories, widely adopted by computer science conferences, that ensure long-term archival storage.

These repositories provide a DOI, i.e., a fixed, persistent identifier for the artifact that is more stable than a direct URL. Please note that the DOI of the artifact should be indicated at the time of paper submission to the research track, even if the artifact is not yet ready. Both Zenodo and Figshare allow users to reserve a DOI and upload the actual artifact later. The DOI will become reachable once the artifact is published. For more information about how to reserve a DOI, please see the following tutorials:

- Zenodo tutorial - How to use and upload your research - YouTube (https://www.youtube.com/watch?v=BPVSErzNtME)

- Figshare support - How to reserve a DOI - YouTube (https://www.youtube.com/watch?v=3J6AuHT3ds8)

Please note that artifacts should not be submitted through GitHub or other software development platforms. Of course, you are free to also share a copy of your artifact through these platforms, but we require that the artifact is submitted and shared through Zenodo or Figshare for long-term archival storage and better interoperability.

We reiterate that the artifact does not need to be submitted at the same time as the paper. The artifact can be uploaded to the reserved DOI after the paper submission deadline, and can be updated until the artifact submission deadline. Information about the artifact submission and its review will not be shared with the PC of the research track.

Artifacts should be submitted with a license that allows researchers to reuse and to extend the artifact (e.g., for comparison purposes in a future paper). The license can be indicated through metadata on the open-data repository, and through a file included in the artifact (e.g., LICENSE.txt). Creative Commons licenses are a typical choice for open data.

The deadline for finalizing artifacts is listed among the important dates below. By that date, authors should submit a dedicated form to inform us that the artifact has actually been submitted. See below for more information about artifact submission.

Artifact Evaluation Process

The artifacts will be evaluated by a dedicated Artifact Evaluation (AE) committee through a single-blind review process. Authors should be available to respond quickly during the artifact evaluation.

The artifact evaluation process is restricted to accepted papers in the research track of DSN (including PER and Tool papers). The evaluation will begin after the review process is complete and acceptance decisions have been made by the research track PC. The research PC chairs will make the submitted paper available to the Artifact Evaluation committee. The information about the artifact evaluation is NOT shared with the research PC in any form.

Evaluation starts with a “kick-the-tires” period, during which evaluators ensure they can access their assigned artifacts and perform basic operations such as building and running a minimal working example. During the kick-the-tires period, the committee can communicate with the authors (anonymously through the submission platform) to give early feedback about the artifact, giving authors the option to address any significant blocking issues. After the kick-the-tires stage ends, communication can only address interpretation concerns for the produced results or minor syntactic issues in the submitted materials.

We recommend that authors present and document their artifacts so that the evaluation committee can use them and complete the evaluation successfully with minimal (ideally no) interaction. To ensure that your instructions are complete, we suggest that you run through them on a fresh setup before submission, following exactly the instructions you have provided.

We expect that most evaluations can be done on any moderately recent desktop or laptop computer. In other cases, to the extent possible, authors are expected to arrange for their artifacts to run on community research testbeds or to provide remote access to their systems (e.g., via SSH) with proper anonymization. If the artifact aims at full reproducibility of results that take a long time to obtain (e.g., because of a large number of experiments, as in fault injection), authors should provide a shortcut or sampling mechanism.
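As an illustration of such a sampling mechanism, the Python sketch below runs a reproducible random subset of a long experiment campaign. It assumes a hypothetical harness in which each experiment (e.g., one fault-injection run) is identified by an integer index and executed by a placeholder function `run_experiment`; the option names `--total`, `--sample`, and `--seed` are likewise illustrative, not a DSN requirement. Fixing the seed makes the sampled subset itself repeatable, so reviewers running the shortcut execute the same experiments as the authors.

```python
import argparse
import random


def run_experiment(exp_id: int) -> str:
    """Hypothetical placeholder for one fault-injection run.

    Replace with the artifact's real experiment logic; here it just
    returns a deterministic outcome label so the sketch is runnable.
    """
    return "failure" if exp_id % 7 == 0 else "masked"


def select_experiments(total: int, fraction: float, seed: int) -> list[int]:
    """Return a reproducible random subset of experiment indices.

    fraction=1.0 selects the full campaign; smaller values give a
    shortcut whose subset is identical across runs with the same seed.
    """
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    k = max(1, round(total * fraction))
    rng = random.Random(seed)
    return sorted(rng.sample(range(total), k))


def main(argv=None) -> dict[str, int]:
    parser = argparse.ArgumentParser(description="Run a (sampled) campaign")
    parser.add_argument("--total", type=int, default=10000,
                        help="number of experiments in the full campaign")
    parser.add_argument("--sample", type=float, default=1.0,
                        help="fraction of experiments to run, e.g. 0.01")
    parser.add_argument("--seed", type=int, default=42,
                        help="seed that fixes which experiments are sampled")
    args = parser.parse_args(argv)

    # Count outcomes per category over the selected experiments only.
    counts: dict[str, int] = {}
    for exp_id in select_experiments(args.total, args.sample, args.seed):
        outcome = run_experiment(exp_id)
        counts[outcome] = counts.get(outcome, 0) + 1
    print(f"ran {sum(counts.values())} of {args.total} experiments: {counts}")
    return counts


if __name__ == "__main__":
    main()
```

For example, `python run_campaign.py --sample 0.01` (the script name is hypothetical) would run 100 of the default 10,000 experiments, while omitting `--sample` runs the full campaign. Documenting the expected runtime of both modes helps reviewers choose between them.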

Distinguished Artifact Award

All submitted artifacts will compete for a “Distinguished Artifact Award”, sponsored by KAUST (https://www.kaust.edu.sa/) and decided by the committee. The award will go to the artifact that (1) has the highest degree of reproducibility, ease of use, and documentation quality, (2) allows other researchers to easily build upon its functionality for their own research, and (3) substantially supports the claims of the paper. We anticipate that at most one artifact (paper) will receive the award; the committee reserves the right not to award any artifact in a given year if none meets the criteria.

Important dates:

The important dates for the Artifact Evaluation (AE) are listed below. All dates refer to AoE (Anywhere on Earth) time.

- December 6, 2023: Research paper submissions (including reserved DOI for the artifact)

- March 5, 2024: Deadline for populating the repository with artifact

- March 15, 2024: AE bidding

- March 21, 2024: Assignment of AE reviewers

- March 22 - 31, 2024: First phase of AE (kick-the-tires)

- April 1 - 20, 2024: Second phase of AE

- April 21 - 25, 2024: Final badges assignment + Distinguished artifact selection

- April 29, 2024: Author notification for the AE track

Volunteering for the Artifact Evaluation Committee

Artifact Evaluation Committee (AEC) members will contribute to the conference by reviewing the companion artifacts of papers accepted in the research track. We invite early-career researchers to join the AEC, including PhD students (e.g., in the second or third year of their studies), who work in any topic area covered by DSN (see the Research Track Call for Contributions). Participating in the AEC will allow you to familiarize yourself with research papers accepted for publication at DSN 2024 and to support experimental reproducibility and open-science practices.

For a given artifact, you will be asked to evaluate its public availability, functionality, and/or ability to reproduce the results from the paper. You will be able to discuss the artifact with other AEC members and anonymously interact with the authors as necessary, for instance if you are unable to get the artifact to work as expected. Finally, you will write a review of the artifact to give constructive feedback to its authors, discuss it with fellow reviewers, and help assign the artifact evaluation badges. You can be located anywhere in the world, as all committee discussions will happen online.

We expect that each member will evaluate 1–2 artifacts, and that each evaluation will take around 10–20 hours. AEC members are expected to allocate time to bid for artifacts they want to review, to read the respective papers, to evaluate and review the corresponding artifacts, and to be available for online discussion (if needed). Please ensure that you have sufficient time and availability (see also the indicative important dates). Please also ensure that you will be able to carry out the evaluation confidentially and independently, without sharing artifacts or related information with others and keeping all discussions within the AEC.

Conflicts of Interest

Authors and AEC members are asked to declare potential conflicts during the paper submission and reviewing process. In particular, a conflict of interest must be declared with (1) anyone who shares an institutional affiliation with an author at the time of submission, (2) anyone with whom an author has collaborated or published within the last two years, (3) anyone who was the advisor or advisee of an author, or (4) anyone who is a relative or close personal friend of an author. For other forms of conflict and related questions, authors must explain the perceived conflict to the track chairs.

AEC members who have conflicts of interest with a paper, including program co-chairs, will be excluded from any discussion concerning the paper.

Guidelines for submitting/reviewing artifacts

As guidance for submitting your artifact, please consider the following points. The Artifact Evaluation Committee will also use these points when assigning badges.

For the "Available" badge:

- Is the artifact publicly available through an open-access repository (Zenodo or Figshare)?

- Is the artifact consistent and complete with respect to the paper?

- Does it provide sufficient user documentation (e.g., command-line syntax)?

- Can it potentially be used to support reproducibility of the paper (even if you could not run the artifact)?

- Does it include a license that allows researchers to reuse and extend the artifact (e.g., for comparison purposes in a future paper)? Creative Commons licenses are a typical choice for open data.

For the "Reviewed" badge:

- Does the artifact include enough documentation about configuration and installation (e.g., on external dependencies, supported environments)?

- Does it include instructions for a "minimum working example", and could you run it?

- Does the artifact include documentation about its internals (e.g., organization of modules and folders, code comments for explaining non-obvious code) that is understandable for other researchers?

For the "Reproducible" badge:

- Does the documentation of the artifact explain which claims of the paper it can reproduce, and how to reproduce them?

- Can you run the analysis automatically, with reasonable effort and reasonable time/resource requirements?

- Do the results of the execution support the claims of the paper?
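To make the questions above easy to answer, authors may ship a script that lists each quantitative claim of the paper next to the value the artifact reproduces. The Python sketch below is hypothetical throughout: the claim names, paper values, tolerances, and the JSON results file are placeholders to be replaced with the artifact's own.

```python
import json
import math
from dataclasses import dataclass


@dataclass
class Claim:
    """One quantitative claim of the paper (all values are hypothetical)."""
    name: str          # where the claim appears, e.g. "Table 2, detection rate"
    key: str           # key under which the artifact stores its result
    paper_value: float
    rel_tol: float     # accepted relative deviation, e.g. 0.05 = 5%


# Hypothetical claims: replace with the paper's actual tables/figures.
CLAIMS = [
    Claim("Table 2, detection rate", "detection_rate", 0.93, 0.05),
    Claim("Figure 4, mean overhead (%)", "overhead_pct", 12.0, 0.10),
]


def load_results(path: str) -> dict:
    """Load aggregated results written by the experiments (assumed JSON)."""
    with open(path) as f:
        return json.load(f)


def check_claims(results: dict, claims: list[Claim]) -> bool:
    """Print one line per claim and return True only if all claims hold."""
    all_ok = True
    for c in claims:
        measured = results.get(c.key)
        # A missing result counts as a failed claim.
        ok = measured is not None and math.isclose(
            measured, c.paper_value, rel_tol=c.rel_tol)
        all_ok = all_ok and ok
        status = "OK  " if ok else "FAIL"
        print(f"[{status}] {c.name}: paper={c.paper_value}, artifact={measured}")
    return all_ok
```

A driver script could call `check_claims(load_results("results.json"), CLAIMS)` after the experiments finish. Stating an explicit tolerance per claim makes clear to reviewers how much run-to-run variation the authors consider acceptable.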

Additional suggestions:

- You are allowed to provide your artifact as a virtual machine. Even in that case, you should still provide source code and scripts that were used to build the virtual machine.

- Please minimize the number of dependencies and the amount of hardware resources needed to run the artifact.

- Please provide clear step-by-step instructions to install and run the artifact. Remember to test them in a clean environment!

- When providing instructions to users and reviewers, please state the expected outputs (or any other side effects) of these instructions, as well as the estimated human and compute time they require.
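The last two suggestions can be combined in a small smoke test that runs the minimum working example, compares its output with the expected output, and reports the elapsed time. In this Python sketch, `MWE_COMMAND` and `EXPECTED_OUTPUT` are hypothetical placeholders: a trivial Python one-liner stands in for the artifact's real entry point.

```python
import subprocess
import sys
import time

# Hypothetical minimum working example: replace with the artifact's own
# command. A trivial Python one-liner is used so the sketch runs anywhere.
MWE_COMMAND = [sys.executable, "-c", "print('minimal example finished')"]
EXPECTED_OUTPUT = "minimal example finished"


def run_smoke_test(command: list[str], expected: str, timeout_s: float = 60.0):
    """Run the minimum working example and compare its output.

    Returns a tuple (passed, elapsed_seconds, actual_output).
    """
    start = time.perf_counter()
    proc = subprocess.run(command, capture_output=True, text=True,
                          timeout=timeout_s)
    elapsed = time.perf_counter() - start
    actual = proc.stdout.strip()
    passed = proc.returncode == 0 and actual == expected
    return passed, elapsed, actual


def main() -> int:
    """Print expected vs. actual output and the elapsed time; return an exit code."""
    passed, elapsed, actual = run_smoke_test(MWE_COMMAND, EXPECTED_OUTPUT)
    print(f"expected: {EXPECTED_OUTPUT!r}")
    print(f"actual:   {actual!r}")
    print(f"{'PASS' if passed else 'FAIL'} in {elapsed:.1f} s")
    return 0 if passed else 1
```

Ending the script with `sys.exit(main())` turns the result into an exit code that automation can check. Comparing the whole output string is the simplest choice; artifacts whose output contains timestamps or random values may need to compare only selected fields.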

See also:

- Artifact Evaluation Guidelines for DSN AEC

- Artifact Evaluation: Tips for Authors – Primordial Loop (padhye.org)

- HOWTO for AEC Submitters - Google Docs

Submissions

To submit your artifact for evaluation, please fill out the form on HotCRP at the following address: https://dessert.unina.it:9001/. You will be asked to provide:

- Information about your DSN'24 Research Track submission;

- The DOI of your artifact (which should point to Zenodo or Figshare);

- The badges you are applying for.

Please note that the Artifact Evaluation Committee may contact you through HotCRP (e.g., during the "kick-the-tires" phase). HotCRP will send you email notifications about incoming messages from the address "dsn24artifacts@unina.it". You may want to whitelist this address in your spam filter.

Acknowledgements

We are grateful to the Artifact Evaluation Committees of previous conferences in Systems Research (https://sysartifacts.github.io/) and Security Research (https://secartifacts.github.io/) for kindly sharing their previous experience with the evaluation process.

Contact:

For more information or any issue, please contact the Artifact Evaluation Chairs at dsn24artifacts@unina.it

- Roberto Natella, Università degli Studi di Napoli Federico II, Italy

- Karthik Pattabiraman, University of British Columbia, Canada